Tech 4 Tots

20/20 Hindsight Dept.

You don’t think of yourself as a Luddite, I’ll wager. Always up on the latest innovations, from robot vacuum cleaners to…robotic teachers?

Beginning in 1962, British science fiction writer Arthur C. Clarke, the “Prophet of the Space Age,” formulated three adages that became known as Clarke’s three laws. The one that seemed to resonate most deeply in the popular imagination was the third: “Any sufficiently advanced technology is indistinguishable from magic.”[2]

It was easy to convince the uninitiated that computers, in a very literal sense, were magic. Just the idea of replacing heavy mechanical typewriters with a paper-free way of editing words on screen was compelling to everyone. I didn’t realize it at the time, but an Iowan was responsible for co-inventing the integrated circuit, the technology at the beating heart of every computer.

Clarke’s futuristic literary musings became actualities thanks to Iowa native and Grinnell College graduate Robert Norton Noyce (December 12, 1927 – June 3, 1990). It was Noyce’s brainchild, the integrated circuit, that transformed sand into silicon chips, and Noyce himself into the original de facto Mayor of Silicon Valley.

He co-founded Fairchild Semiconductor in 1957 and Intel in 1968. (I clearly remember my mother(!) sitting me down to read Fairchild’s annual report, which had arrived in the mail, and telling me: “Son, this is the future, and you should know about it.”)

Clarke’s quip about technology appearing as magic was certainly true. The operative word in Clarke’s statement was “indistinguishable.” To the uninitiated, computers did appear to be objects from another reality. Computers amplified and augmented human abilities to such an extent that they seemed to bestow magic powers on those within the digital ‘priesthood.’

When folks got a look at the wondrous range of tasks a computer could handle, a gold rush of sorts began. More and more industries and services jumped onto the silicon stagecoach, seeking to grab some of that magic for themselves. Kinda like AI today.

============================================================================

In the computer gold rush of the 1990s, few were counseling caution. However, soon after the dawn of the World Wide Web, otherwise known as the ‘consumer’ Internet, we saw clouds gathering on the horizon.

I was, like many others, enamored of how easily I could “cut, copy, paste and print” my words, images and music, all accomplished as effortlessly as present-day users summon ChatGPT for advice.

It wasn’t until later that the potentially society- and industry-killing aspects of this new technology became glaringly apparent. Email, the “killer app” that brought us all together, soon threatened to fray our culture, as cyberbullies could reach out not to inform but to do mischief.

Y’all may have heard of AI music threatening our artistic lives today, but in 1999 an app called Napster fired the first shot across the bow of independent artists wanting to retain control of their creative output, as the band Metallica came to find out.

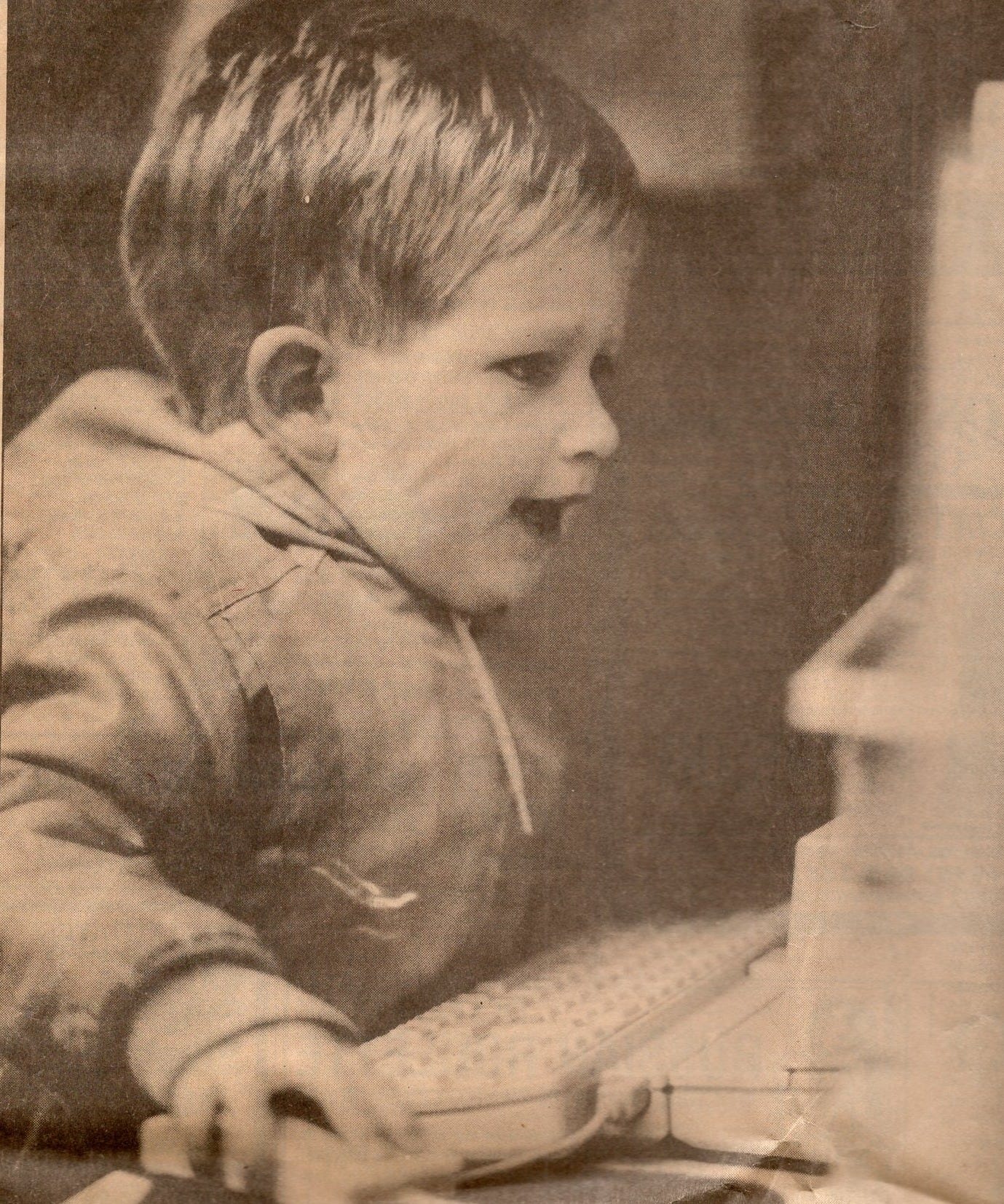

Apple Computer Inc.’s early reputation (and sales) were bolstered by the fact that the company practically gave its products (the Apple ][) to schools around the nation, ostensibly hoping to get the education community “hooked.”

(Full disclosure: From 1996 until 2000, I was a member of Apple Computer’s Partners-In-Education team. Teaching teachers how to use these tools, like teaching the fundamentals of jazz improvisation, was one of the great thrills of my life…until the Internet ‘ruined’ everything. Slight hyperbole here.)

Before the Internet, teachers had fast stand-alone computers loaded with increasingly capable applications. Computer-savvy educators could now bring words, still images, sound, music and video to the learning process. It was pretty close to sublime if you were an adventurous educator. Huge fun.

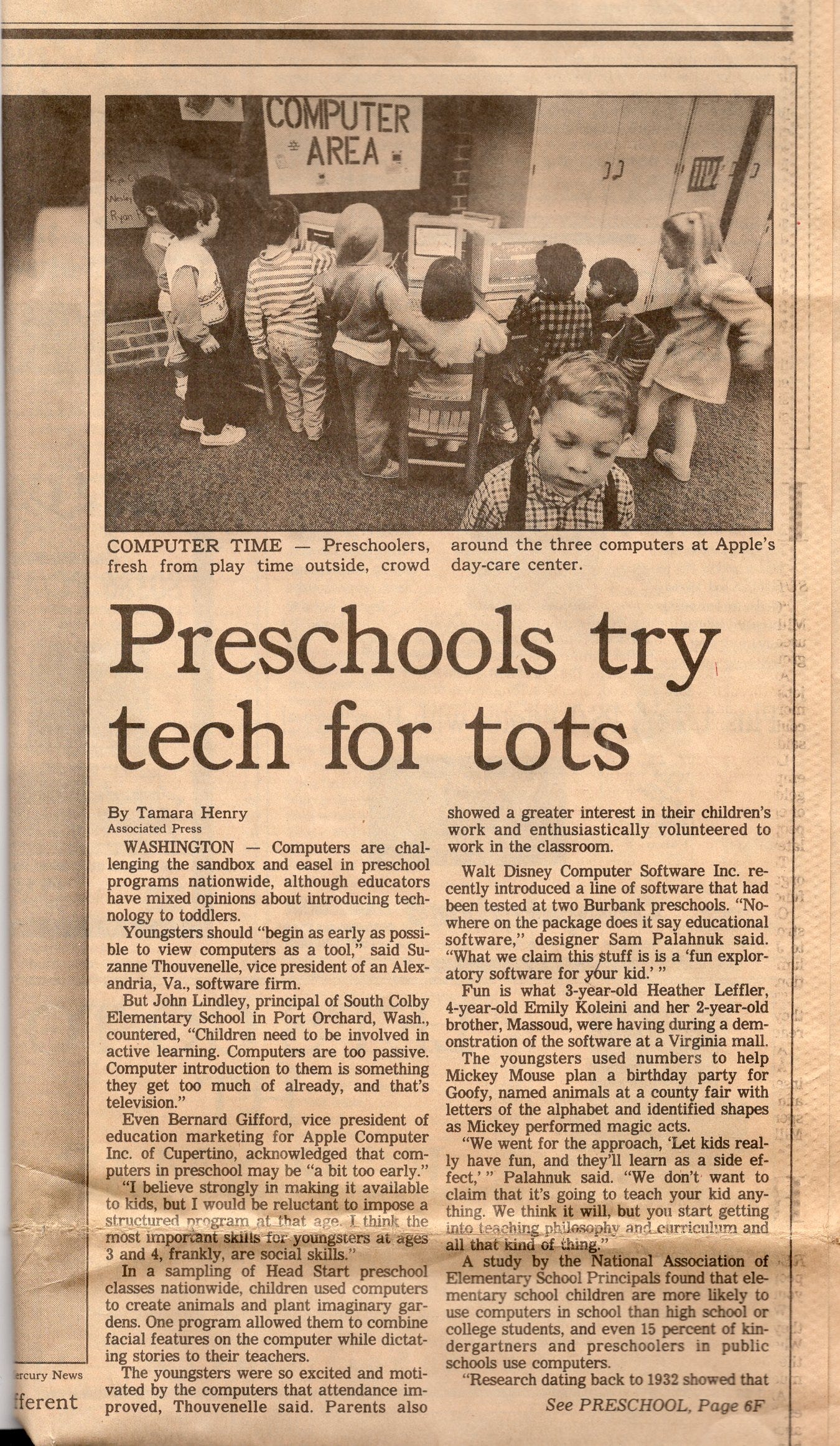

However, once that Ethernet port was affixed to the computer’s motherboard, the rest of the world was let into what had formerly been a private, localized educational computing space.

============================================================================

We were so busy having TechFun in the ’90s that most folks didn’t notice the dark clouds on the horizon. Napster, an ethical Trojan horse, was handing its users unparalleled convenience while simultaneously enabling the theft of intellectual property from copyright holders, including musicians, visual artists and many more.

Because we failed to instill a sense of ethics commensurate with the power now in the hands of users, a new reality, embodied in the phrase “content wants to be free,” took hold.

Computer nerds, long on talent but short on ethics, came up with a smug pirate manifesto that declared: Because I can freely use the Internet to access (art/music/images/writing/content), I should be free to access it, resell it or take credit for it, with no compensation whatsoever to the original creators.

The guys at Napster and other techies apparently felt they were beyond the reach of ethics (they promptly showered tech-illiterate lawmakers with campaign ca$h to bend the still-fresh laws to their advantage). The World Wide Web was the new, “magical” medium, which they felt shouldn’t be hindered by “old-fashioned” ideas about the ownership of intellectual property. (Of course, when it came to their own ideas being infringed, the high-tech boys were always ready to go to legal war to protect their patents and intellectual property.)

For a well-researched account of this process, see Robert Levine’s book on the subject.

…which leads us to this moment.

As a society now struggling with mental health, legal and political issues, our failure to rein in Silicon Valley’s excesses looms larger every day (looking hard at you, Facebook). As a result, our children face challenges that could not have been imagined back in 1990, when we first ventured, awestruck, down the road to Silicon nirvana.

I’m sure Robert Noyce was not thinking of how to exploit the minds (and buying habits) of middle and high school students when he first transformed sand into silicon. He did, however, unleash a new medium that would, in short order, affect everyone from Cold War strategists to elementary school teachers.

Could he have imagined that his cute little baby technology would, within 70 years, morph into a beast threatening to dissolve the remaining vestiges of our honorable relationships with each other?

In 1998, I suggested to the headmaster of a tech-savvy college prep school that we institute a course called Digital Morality 101. My suggestion was met with a quizzical look, as if to say, “Why would we need to do something like that?”

[End of Part One]

Support The Iowa Writers’ Collaborative

My Integrated Life Begins Here: Episode One

Well said all around, Dartanyan. So much more to say, but I'll just comment that I agree with Robert, and I would call it FI, for Fake Information, which we should all be able to agree is not a good thing to follow.

As we've seen in stereophonic sound and frightening color, AI can be a blessing or a curse. The problem for me is in the name itself: artificial. If it's, say, 'Assisted' or 'Conducive,' that's great. Artificial? I'll pass.